Applies To:

BIG-IP AAM

- 12.1.0

BIG-IP APM

- 12.1.0

BIG-IP LTM

- 12.1.0

BIG-IP DNS

- 12.1.0

BIG-IP ASM

- 12.1.0

Introduction to the vCMP System

What is vCMP?

Virtual Clustered Multiprocessing™ (vCMP®) is a feature of the BIG-IP® system that allows you to provision and manage multiple hosted instances of the BIG-IP software on a single hardware platform. A vCMP hypervisor allocates a dedicated amount of CPU, memory, and storage to each BIG-IP instance. As a vCMP system administrator, you can create BIG-IP instances and then delegate the management of the BIG-IP software within each instance to individual administrators.

A key part of the vCMP system is its built-in flexible resource allocation feature. With flexible resource allocation, you can instruct the hypervisor to allocate a different amount of resources, in the form of cores, to each BIG-IP instance, according to the particular needs of that instance. Each core that the hypervisor allocates contains a fixed portion of system CPU and memory.

Furthermore, whenever you add blades to the VIPRION® cluster, properly configured BIG-IP instances can take advantage of those additional CPU and memory resources without traffic interruption.
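To make the core-based arithmetic concrete, here is a minimal Python sketch. It assumes that a guest receives a fixed number of cores on each slot it runs on, that it runs one VM per slot, and that each core carries a fixed amount of memory. The names `BladePlatform`, `Guest`, `allowed_slots`, and `guest_resources`, as well as the 3000 MB per-core figure, are hypothetical illustrations, not F5 names or published platform values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BladePlatform:
    """Hypothetical blade model; real per-core CPU and memory vary by platform."""
    name: str
    memory_mb_per_core: int


@dataclass(frozen=True)
class Guest:
    name: str
    cores_per_slot: int  # cores the host allocates to the guest on each slot it uses
    allowed_slots: int   # assumption: maximum number of slots the guest may span


def guest_resources(guest: Guest, platform: BladePlatform, populated_blades: int) -> dict:
    """Sketch of a guest's allocation, in cores and memory.

    Assumes one guest VM per slot, and that the guest occupies every populated
    blade up to its allowed_slots limit, so adding blades can grow its share.
    """
    slots = min(guest.allowed_slots, populated_blades)
    total_cores = guest.cores_per_slot * slots
    return {
        "slots": slots,
        "total_cores": total_cores,
        "memory_mb_per_vm": guest.cores_per_slot * platform.memory_mb_per_core,
        "total_memory_mb": total_cores * platform.memory_mb_per_core,
    }


if __name__ == "__main__":
    blade = BladePlatform("example-blade", memory_mb_per_core=3000)  # made-up figure
    guest_a = Guest("guest_A", cores_per_slot=2, allowed_slots=4)
    print(guest_resources(guest_a, blade, populated_blades=2))  # before adding blades
    print(guest_resources(guest_a, blade, populated_blades=4))  # after adding two blades
```

With these assumed numbers, the guest grows from 4 to 8 cores when the cluster goes from two to four blades, while the per-VM memory stays the same, because each new blade simply hosts another VM of the same size.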

At a high level, the vCMP system includes two main components:

- vCMP host

- The vCMP host is the system-wide hypervisor that makes it possible for you to create and view BIG-IP instances, known as guests. Through the vCMP host, you can also perform tasks such as creating trunks and VLANs, and managing guest properties. For each guest, the vCMP host allocates system resources, such as CPU and memory, according to the particular resource needs of the guest.

- vCMP guests

- A vCMP guest is an instance of the BIG-IP software that you create on the vCMP system for the purpose of provisioning one or more BIG-IP® modules to process application traffic. A guest consists of a TMOS® instance, plus one or more BIG-IP modules. Each guest has its own share of hardware resources that the vCMP host allocates to the guest, as well as its own management IP addresses, self IP addresses, virtual servers, and so on. In this way, each guest effectively functions as its own multi-blade VIPRION® cluster, configured to receive and process application traffic with no knowledge of other guests on the system. Furthermore, each guest can use TMOS® features such as route domains and administrative partitions to create its own multi-tenant configuration. Each guest requires its own guest administrator to provision, configure, and manage BIG-IP modules within the guest. The maximum number of guests that a fully-populated chassis can support varies by chassis and blade platform.

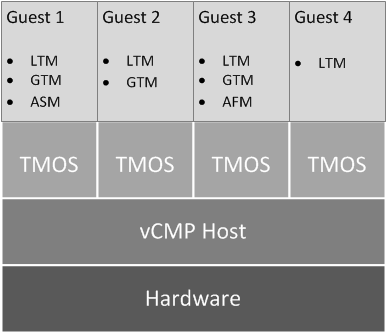

This illustration shows a basic vCMP system with a host and four guests. Note that each guest has a different set of modules provisioned, depending on the guest's particular traffic requirements.

Example of a four-guest vCMP system

Other vCMP system components

In addition to the host and guests, the vCMP® system includes these components:

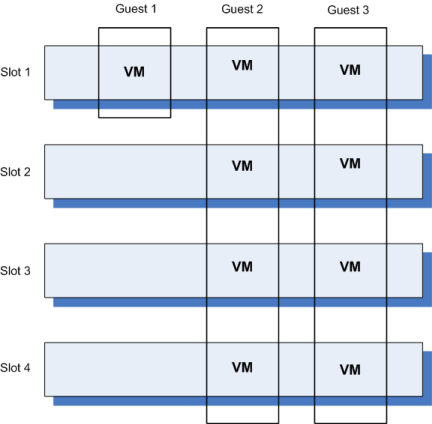

- Virtual machine

- A virtual machine (VM) is an instance of a guest that resides on a slot and functions as a member of the guest's virtual cluster. This illustration shows a system with several guests, each with one or more VMs.

Guest VMs as cluster members

- Virtual disk

- A virtual disk is the portion of disk space on a slot that the system allocates to a guest VM. A virtual disk image is typically a 100-gigabyte sparse file. For example, if a guest spans three slots, the system creates three virtual disks for that guest, one for each blade on which the guest is provisioned. Each virtual disk is implemented as an image file with an .img extension, such as guest_A.img.

- Core

- A core is a portion of a blade's CPU and memory that the vCMP host allocates to a guest. The amount of CPU and memory that a core provides varies by blade platform.
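The one-virtual-disk-per-slot rule can be sketched as follows. This is a hypothetical model, not an F5 interface: `VirtualDisk` and `virtual_disks_for` are illustrative names, the 100 GB value is only the "typical" size the text mentions, and the assumption that every slot's image file is named `<guest>.img` is extrapolated from the guest_A.img example.

```python
from __future__ import annotations

from dataclasses import dataclass

# The text says a virtual disk is *typically* a 100 GB sparse file; treat this as a default.
TYPICAL_VIRTUAL_DISK_GB = 100


@dataclass(frozen=True)
class VirtualDisk:
    guest: str
    slot: int
    image_file: str
    size_gb: int = TYPICAL_VIRTUAL_DISK_GB  # apparent size; a sparse file uses less on disk


def virtual_disks_for(guest_name: str, slots: list[int]) -> list[VirtualDisk]:
    """Return one virtual disk per slot on which the guest is provisioned.

    Assumption: each slot holds an image file named "<guest>.img".
    """
    return [VirtualDisk(guest_name, slot, f"{guest_name}.img") for slot in sorted(set(slots))]


if __name__ == "__main__":
    for disk in virtual_disks_for("guest_A", [1, 2, 3]):
        print(disk)
```

A guest spanning three slots therefore yields three disks, matching the example in the text.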

Supported BIG-IP system versions

On a vCMP® system, the host and guests can generally run any combination of BIG-IP® 11.x software. For example, in a three-guest configuration, the host can run version 11.2.1, while guests run 11.2, 11.3, and 11.4. With this type of version support, you can run multiple versions of the BIG-IP software simultaneously for testing, migration staging, or environment consolidation.

The exact combination of host and guest BIG-IP versions that F5 Networks® supports varies by blade platform. For details, see the vCMP host and supported guest version matrix on the AskF5 Knowledge Base at http://support.f5.com.

BIG-IP license considerations for vCMP

The BIG-IP® system license authorizes you to provision the vCMP® feature and create guests with one or more BIG-IP system modules provisioned. Note the following considerations:

- Each guest inherits the license of the vCMP host.

- The host license must include all BIG-IP modules that are to be provisioned across all guest instances. Examples of BIG-IP modules are BIG-IP Local Traffic Manager™ and BIG-IP Global Traffic Manager™.

- The license allows you to deploy the maximum number of guests that the specific blade platform allows.

- If the license includes the Appliance mode feature, you cannot enable Appliance mode for individual guests; when licensed, Appliance mode applies to all guests and cannot be disabled.

You activate the BIG-IP system license when you initially set up the vCMP host.
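Because every guest inherits the host license, a planned set of guests can be checked against it before any guest is created. The sketch below is a hypothetical pre-flight check, not an F5 tool: `HostLicense`, `check_guest_plan`, the short module labels ("ltm", "asm", "gtm"), and the `max_guests` value are all illustrative assumptions, since the real guest limit depends on the blade platform.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class HostLicense:
    modules: frozenset[str]  # BIG-IP modules licensed on the vCMP host
    max_guests: int          # hypothetical: the guest limit of the specific blade platform


def check_guest_plan(lic: HostLicense, planned: dict[str, set[str]]) -> list[str]:
    """Return problems with a planned set of guests and their modules.

    Guests inherit the host license, so every module provisioned in any guest
    must appear in the host license.
    """
    problems = []
    if len(planned) > lic.max_guests:
        problems.append(f"{len(planned)} guests planned; platform allows {lic.max_guests}")
    for guest, modules in sorted(planned.items()):
        missing = modules - lic.modules
        if missing:
            problems.append(f"{guest}: not in host license: {', '.join(sorted(missing))}")
    return problems


if __name__ == "__main__":
    lic = HostLicense(modules=frozenset({"ltm", "asm"}), max_guests=4)
    print(check_guest_plan(lic, {"guest_A": {"ltm"}, "guest_B": {"ltm", "gtm"}}))
```

Here guest_B is flagged because its module is missing from the host license, which is exactly the situation the second consideration above rules out.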

vCMP provisioning

To enable the vCMP® feature, you perform two levels of provisioning. First, you provision the vCMP feature as a whole. When you do this, the BIG-IP® system, by default, dedicates most of the disk space to running the vCMP feature, and in the process, creates the host portion of the vCMP system. Second, once you have configured the host to create the guests, each guest administrator logs in to the relevant guest and provisions the required BIG-IP modules. In this way, each guest can run a different combination of modules. For example, one guest can run BIG-IP® Local Traffic Manager™ (LTM®) only, while a second guest can run LTM® and BIG-IP ASM™.

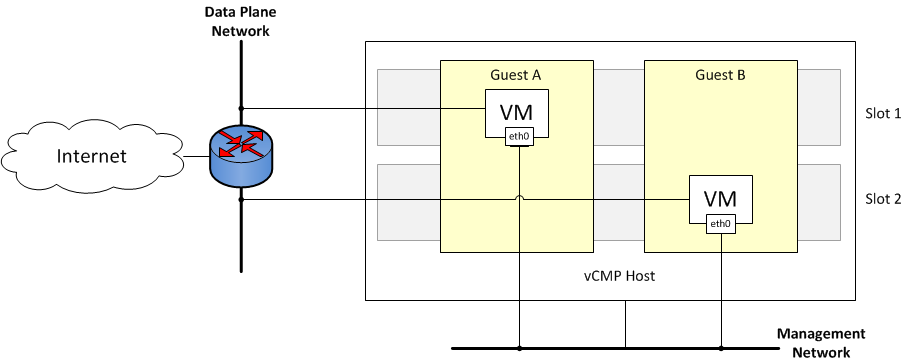

Network isolation

The vCMP® system separates the data plane network from the management network. That is, the host operates with the hardware switch fabric to control the guest data plane traffic. Each slot in the chassis has its own network interface for data plane traffic that is separate from the management network. This separation of the data plane network from the management network provides true multi-tenancy by ensuring that traffic for a guest remains separate from all other guest traffic on the system.

The following illustration shows the separation of the data plane network from the management network.

Isolation of the data plane network from the management network

System administration overview

Administering a vCMP® system requires two distinct types of administrators: a vCMP host administrator who creates guests and allocates resources to those guests, and a vCMP guest administrator who provisions and configures BIG-IP modules within a specific guest.

At a minimum, these tasks must be performed on the vCMP host by a host administrator:

- Provision the vCMP feature

- Create vCMP guests, including allocating system resources to each guest

- Create and manage VLANs

- Create and manage trunks

- Manage interfaces

- Configure access control to the host by other host administrators, through user accounts and roles, partition access, and so on

These tasks are performed on a vCMP guest by a guest administrator:

- Provision BIG-IP modules

- Create self IP addresses and associate them with host VLANs

- Create and manage features within BIG-IP modules, such as virtual servers, pools, policies, and so on

- Configure device service clustering (DSC)

- Configure access control to the guest by other guest administrators, through user accounts and roles, partition access, and so on

After you initially set up the vCMP host, you will have a standalone, multi-tenant vCMP system with some number of guests defined. A guest administrator will then be ready to provision and configure the BIG-IP modules within a guest to process application traffic. Optionally, if the host administrator has set up a second chassis with equivalent guests, a guest administrator can configure high availability for any two equivalent guests.

Guest access to the management network

As a vCMP host administrator, you can configure each vCMP® guest in one of three management network states: bridged to the management network, isolated from the management network, or isolated from the management network but still accessible by way of the host-only interface.

About bridged guests

When you create a vCMP® guest, you can specify that the guest is a bridged guest. A bridged guest is one that is connected to the management network. This is the default network state for a vCMP guest. This network state bridges the guest's virtual management interface to the physical management interface of the blade on which the guest virtual machine (VM) is running.

You typically log in to a bridged guest using its cluster management IP address, and by default, guest administrators with the relevant permissions on their user accounts have access to the bash shell, the BIG-IP® Configuration utility, and the Traffic Management Shell (tmsh). However, if per-guest Appliance mode is enabled on the guest, administrators have access to the BIG-IP Configuration utility and tmsh only.

Although the guest and the host share the host's Ethernet interface, the guest appears as a separate device on the local network, with its own MAC address and IP address.

Note that changing the network state of a guest from isolated to bridged causes the vCMP host to dynamically add the guest's management interface to the bridged management network. This immediately connects all of the guest's VMs to the physical management network.

About isolated guests

When you create a vCMP® guest, you can specify that the guest is an isolated guest. Unlike a bridged guest, an isolated guest is disconnected from the management network. As such, the guest cannot communicate with other guests on the system. Also, because an isolated guest has no management IP address for administrators to use to access the guest, the host administrator, after creating the guest, must use the vconsole utility to log in to the guest and create a self IP address that guest administrators can then use to access the guest.

About Appliance mode

Appliance mode is a BIG-IP system feature that adds a layer of security in two ways:

- By preventing administrators from using the root user account.

- By granting administrators access to the Traffic Management Shell (tmsh) instead of the advanced (bash) shell.

You can implement Appliance mode in one of two ways:

- System-wide through the BIG-IP license

- You can implement Appliance mode on a system-wide basis through the BIG-IP® system license. However, this solution might not be ideal for a vCMP® system. When a vCMP system is licensed for Appliance mode, administrators for all guests on the system are subject to Appliance mode restrictions. Also, you cannot disable the Appliance mode feature when it is included in the BIG-IP system license.

- On a per-guest basis

- Instead of licensing the system for Appliance mode, you can enable or disable the Appliance mode feature for each guest individually. By default, per-guest Appliance mode is disabled when you create the guest. After Appliance mode is enabled, you can disable or re-enable this feature on a guest at any time.
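The interaction between license-wide and per-guest Appliance mode can be summarized as a small state model. This is a hypothetical sketch: `GuestApplianceMode` and `set_per_guest` are illustrative names, not part of any F5 API. It encodes three rules from the text: per-guest Appliance mode starts out disabled, it can be toggled freely when the license does not include Appliance mode, and a license-wide Appliance mode applies to every guest and cannot be turned off.

```python
class GuestApplianceMode:
    """Hypothetical model of how Appliance mode applies to one vCMP guest."""

    def __init__(self, license_includes_appliance_mode: bool) -> None:
        self._licensed = license_includes_appliance_mode
        self._per_guest = False  # per-guest Appliance mode is disabled when the guest is created

    @property
    def enabled(self) -> bool:
        # A license-wide Appliance mode applies to every guest regardless of per-guest settings.
        return self._licensed or self._per_guest

    def set_per_guest(self, enabled: bool) -> None:
        # With Appliance mode in the license, the per-guest toggle is not available.
        if self._licensed:
            raise ValueError("Appliance mode is enforced by the system license")
        self._per_guest = enabled


if __name__ == "__main__":
    guest = GuestApplianceMode(license_includes_appliance_mode=False)
    guest.set_per_guest(True)
    print(guest.enabled)  # True
```

Raising an error for the licensed case mirrors the statement that you cannot enable or disable Appliance mode for individual guests when the license already includes it.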

User access restrictions with Appliance mode

When you enable Appliance mode on a guest, the system enhances security by preventing administrators from accessing the root-level advanced shell (bash).

- For bridged guests

- For a bridged guest with Appliance mode enabled, administrators can access the guest through the guest's management IP address. Administrators for a bridged guest can manage the guest using the BIG-IP® Configuration utility and tmsh.

- For isolated guests

- For an isolated guest with Appliance mode enabled, administrators must access the guest through one of the guest's self IP addresses, configured with appropriate port lockdown values. Administrators for an isolated guest can manage the guest using the BIG-IP Configuration utility and tmsh.
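The access rules for bridged and isolated guests can be condensed into a lookup, sketched below in Python. `ManagementNetwork` and `admin_access` are hypothetical names. The sketch covers only the bridged and isolated states described here, leaving out the host-only state, and it assumes that bash is available on any guest without Appliance mode, but only to accounts whose permissions allow it.

```python
from enum import Enum


class ManagementNetwork(Enum):
    BRIDGED = "bridged"
    ISOLATED = "isolated"


def admin_access(network: ManagementNetwork, appliance_mode: bool) -> dict:
    """Sketch of how guest administrators reach a guest and which tools they get."""
    if network is ManagementNetwork.BRIDGED:
        address = "guest management IP address"
    else:
        # Isolated guests have no management IP; the host administrator first
        # creates a self IP address (with suitable port lockdown) via vconsole.
        address = "guest self IP address"
    tools = ["BIG-IP Configuration utility", "tmsh"]
    if not appliance_mode:
        tools.append("bash")  # only for accounts whose permissions allow it
    return {"address": address, "tools": tools}


if __name__ == "__main__":
    print(admin_access(ManagementNetwork.ISOLATED, appliance_mode=True))
```

The network state determines *where* administrators connect, while Appliance mode determines *which* shells they get; the two settings are independent.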

BIG-IP version restrictions with Appliance mode

If you want to use the BIG-IP® version 11.5 Appliance mode feature on a guest, both the host and the guest must run BIG-IP version 11.5 or later.
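The version requirement is a simple comparison on both systems. The sketch below assumes version strings are plain dotted integers such as "11.5.1" (hotfix suffixes are not handled). The function names are hypothetical. Comparing Python tuples element by element gives the expected ordering, so that (11, 4, 1) sorts before (11, 5).

```python
MIN_VERSION_FOR_PER_GUEST_APPLIANCE_MODE = (11, 5)


def parse_version(version: str) -> tuple:
    """Turn a dotted version such as "11.5.1" into (11, 5, 1) for comparison."""
    return tuple(int(part) for part in version.split("."))


def per_guest_appliance_mode_supported(host_version: str, guest_version: str) -> bool:
    # Both the host and the guest must run 11.5 or later.
    return all(
        parse_version(v) >= MIN_VERSION_FOR_PER_GUEST_APPLIANCE_MODE
        for v in (host_version, guest_version)
    )


if __name__ == "__main__":
    print(per_guest_appliance_mode_supported("12.1.0", "11.4.1"))  # False: guest too old
```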